Former Biden aide Michael LaRosa expresses concern over Democratic support for Maine Senate candidate Graham Platner, deeming him unfit to challenge incumbent Susan Collins amid a growing scandal.

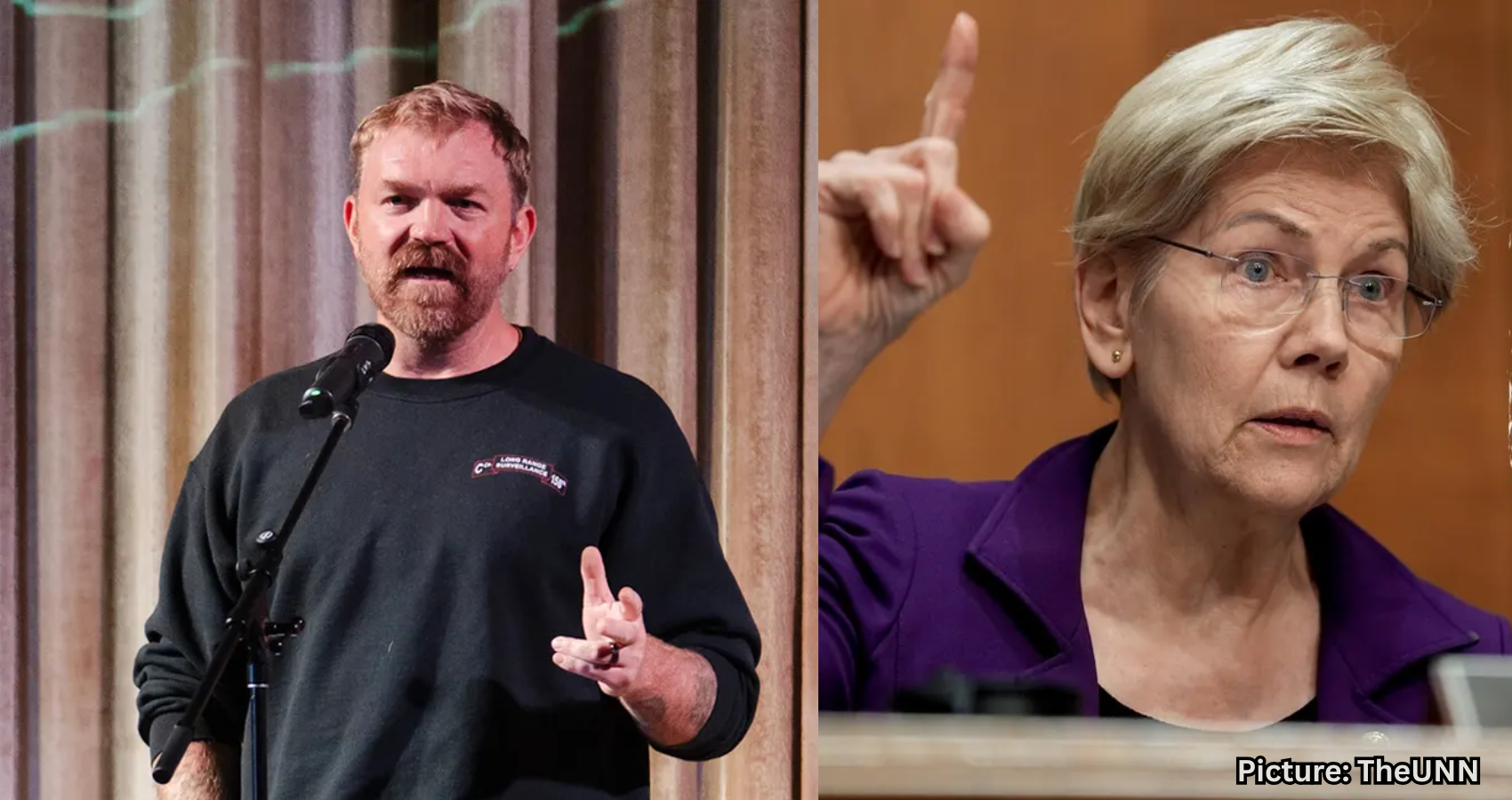

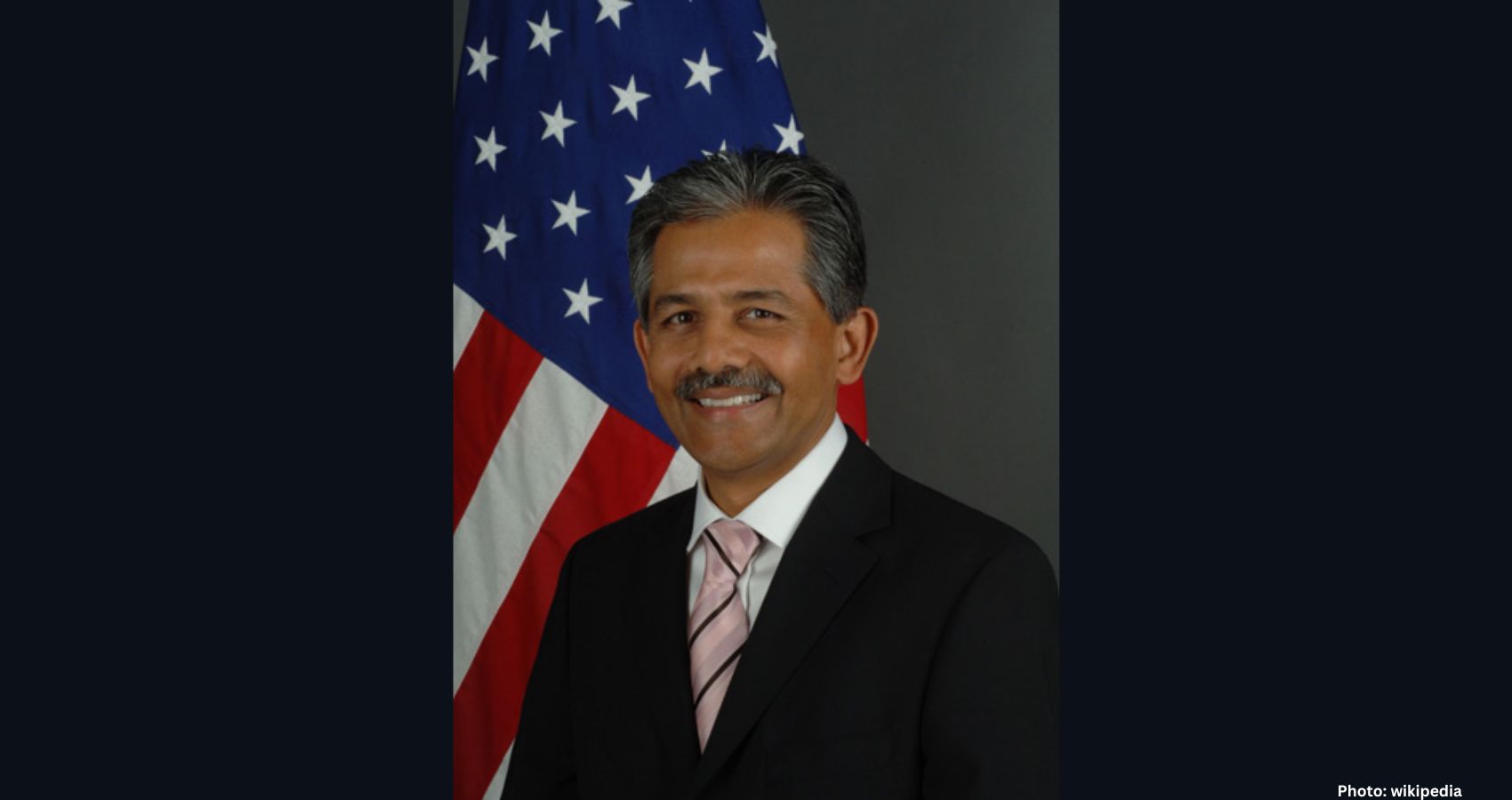

Michael LaRosa, former press secretary for First Lady Jill Biden, has voiced alarm at the level of support that Graham Platner, a candidate for the U.S. Senate seat in Maine, is receiving from the Democratic Party. LaRosa described the Platner campaign as indicative of a significant divide within the party.

“I am shocked at some of the people, some of the Democrats who I consider friends, being so all-or-nothing about this guy, and I don’t really understand why,” LaRosa told Fox News Digital. He expressed disbelief that Platner is being embraced by Democrats, stating, “He is not really representative of the values I would expect in a Democratic candidate, even by today’s standards.” LaRosa added that he is surprised by the number of individuals rallying around Platner in their quest to defeat incumbent Republican Senator Susan Collins.

Platner’s campaign has faced scrutiny due to resurfaced sexually explicit and vulgar online posts, including one that ridiculed a Purple Heart veteran who was shot multiple times by the Taliban. Additionally, a tattoo of a Nazi symbol on his chest has drawn criticism from across the political spectrum. Despite this backlash, Platner continues to lead in the polls.

LaRosa accused Democrats of ignoring serious concerns about Platner’s controversial past. “Democrats are playing a really dangerous game,” he remarked. “It’s really funny to me how selective and how short memories are in politics.” He emphasized that he personally draws the line at supporting “a Democrat who has Nazi tattoos,” asserting that Platner is “just not for me.”

While LaRosa acknowledged the desire to secure the Senate seat for Democrats, he stated, “I’m not willing to take anybody off the street to run just because they arouse some vibes in a few portions of the Democratic Party.” He expressed a preference for Collins, saying, “Susan Collins is much more my style than somebody who I consider kind of a performative economic populist like Graham Platner.” LaRosa pointed out that Platner attended elite private schools, which LaRosa said contradicts Platner’s claims of being in touch with the average voter.

LaRosa believes that winning the election is “just not worth it” if it means supporting a candidate like Platner. “It’s his own behavior that disqualifies him,” he stated, referring to Platner’s past rhetoric, his advocacy of political violence and his mockery of wounded U.S. soldiers. “Just because Platner is a Democrat does not mean he is qualified to serve in the U.S. Senate,” LaRosa added. “That does not make him a good candidate. It won’t make him a good senator. It just makes him a D. What’s the point in having a party if you don’t have standards anymore?”

Despite Platner’s strong polling numbers, LaRosa recalled his experience campaigning in 2020 with former Maine House Speaker Sara Gideon, who led Collins in the polls before ultimately losing in a highly competitive race. “Susan Collins did not lead Sara Gideon in a single poll,” he noted. “Six years ago, our Democrat outpolled, outraised and outspent Susan Collins, and the state of Maine on Election Day chose both Joe Biden and Susan Collins by 9 points.”

Platner became the presumptive Democratic nominee after two-term Governor Janet Mills ended her campaign last month. LaRosa criticized the party’s current trajectory, suggesting that moderate positions, such as Senator John Fetterman’s support for Israel and his criticism of the Democratic Party’s handling of border security, are now being used as grounds to purge candidates who do not align perfectly with the party’s leftward shift.

“We’re going to do to John Fetterman exactly what Trump is doing to candidates who opposed him or aren’t with him 100% of the time, and I don’t like it,” LaRosa said. He warned that Democrats could face a “major disappointment” and stated that he personally would not “publicly support, give money to, contribute to or work for” Platner.

LaRosa expressed concern that the Democratic Party believes Platner represents what voters outside of the Beltway want. “My party seems to think that this guy represents what the rest of America wants or what Maine voters want,” he said. “Democrats believe that Graham Platner seems to represent what people are yearning for and wanting outside of Manhattan and D.C.”

Ultimately, LaRosa emphasized that the decision now lies with the voters of Maine. “Maine now has the choice to decide if Platner will represent their values and their views and their anger and their frustrations,” he said. “They now have the opportunity to vote for him or Susan Collins, and we, the Democratic Party, have given and provided Maine that choice for them.”

Fox News Digital reached out to the Platner campaign for comment.